Victor Eliashberg

A nonclassical symbolic theory of working memory, mental computations, and mental set

Jan 08, 2009

Abstract: The paper tackles four basic questions associated with the human brain as a learning system. How can the brain learn to (1) mentally simulate different external memory aids, (2) perform, in principle, any mental computations using imaginary memory aids, (3) recall real sensory and motor events and synthesize a combinatorial number of imaginary events, and (4) dynamically change its mental set to match a combinatorial number of contexts? We propose a uniform answer to (1)-(4) based on the general postulate that the human neocortex processes symbolic information in a "nonclassical" way. Instead of manipulating symbols in a read/write memory, as classical symbolic systems do, it manipulates the states of a dynamical memory representing different temporary attributes of immovable symbolic structures stored in a long-term memory. The approach is formalized as the concept of E-machine. Intuitively, an E-machine is a system that deals mainly with characteristic functions representing subsets of memory pointers rather than with the pointers themselves. This nonclassical symbolic paradigm is Turing universal and, unlike the classical one, is efficiently implementable in homogeneous neural networks with temporal modulation whose topology resembles that of the neocortex.
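To make the contrast with a read/write memory concrete, here is a toy sketch, not the E-machine formalism from the paper: long-term memory is an append-only list of (input, output) associations that is never moved or overwritten, and the only thing a "context" changes is a 0/1 flag per entry, i.e. a characteristic function over memory pointers. The class name `ToyEMachine`, its methods, and the exact-match recall rule are hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToyEMachine:
    # Hypothetical toy, not the paper's E-machine.
    # Long-term memory: immovable (input, output) associations, append-only.
    ltm: list = field(default_factory=list)
    # Dynamical "temporary attribute" of each LTM entry (0 or 1).
    # The set of flagged indices is a characteristic function over pointers.
    mask: list = field(default_factory=list)

    def learn(self, x, y):
        # New associations are appended; existing entries never move.
        self.ltm.append((x, y))
        self.mask.append(1)

    def set_context(self, predicate):
        # Change the "mental set" by recomputing the characteristic function;
        # the stored associations themselves are left untouched.
        self.mask = [1 if predicate(x, y) else 0 for (x, y) in self.ltm]

    def recall(self, x):
        # Outputs of all currently enabled entries whose input matches x.
        return [y for (xi, y), m in zip(self.ltm, self.mask) if m and xi == x]


if __name__ == "__main__":
    m = ToyEMachine()
    m.learn("red", "stop")
    m.learn("red", "rose")
    m.learn("green", "go")
    print(m.recall("red"))  # prints ['stop', 'rose']
    m.set_context(lambda x, y: y in {"stop", "go"})  # a "traffic" context
    print(m.recall("red"))  # prints ['stop']
```

The same stored data yields different behavior under different masks, which is the loose intuition behind reconfiguring a system for many contexts without rewriting its long-term memory.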

Concept of E-machine: How does a "dynamical" brain learn to process "symbolic" information? Part I

Mar 20, 2004

Abstract: The human brain has many remarkable information processing characteristics that deeply puzzle scientists and engineers. Among the most important and most intriguing of these characteristics are the brain's broad universality as a learning system and its mysterious ability to dynamically change (reconfigure) its behavior depending on a combinatorial number of different contexts. This paper discusses a class of hypothetically brain-like, dynamically reconfigurable associative learning systems that shed light on the possible nature of these properties of the brain. The systems are built on a general principle referred to as the concept of E-machine. The paper addresses the following questions: 1. How can "dynamical" neural networks function as universal programmable "symbolic" machines? 2. What kind of universal programmable symbolic machine can form arbitrarily complex software through a programming process similar to biological associative learning? 3. How can a universal learning machine dynamically reconfigure its software depending on a combinatorial number of possible contexts?

What Is Working Memory and Mental Imagery? A Robot that Learns to Perform Mental Computations

Sep 08, 2003

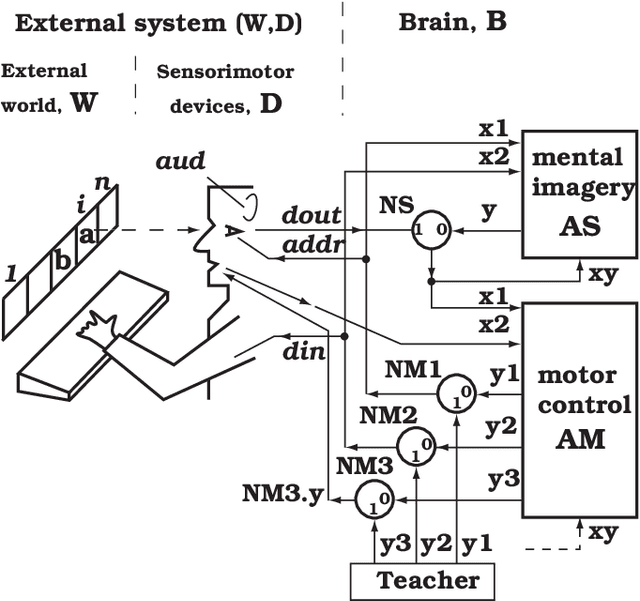

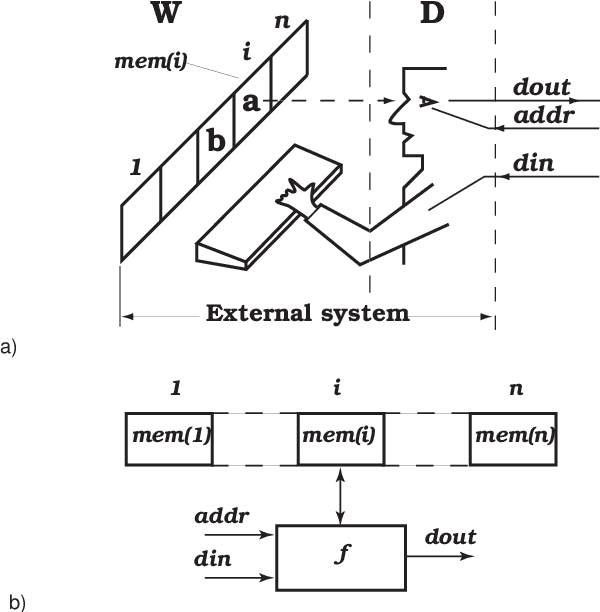

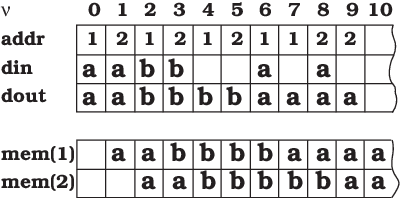

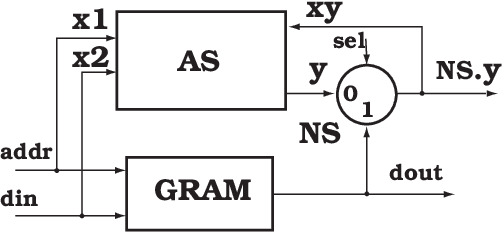

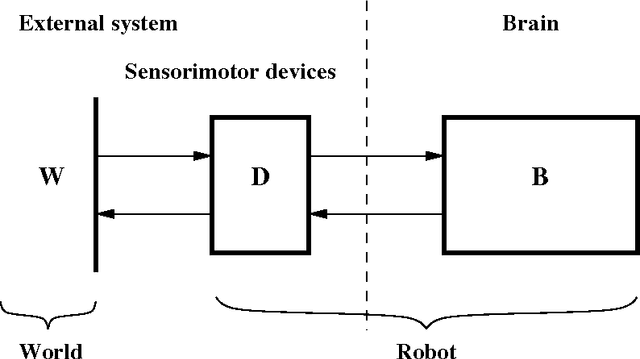

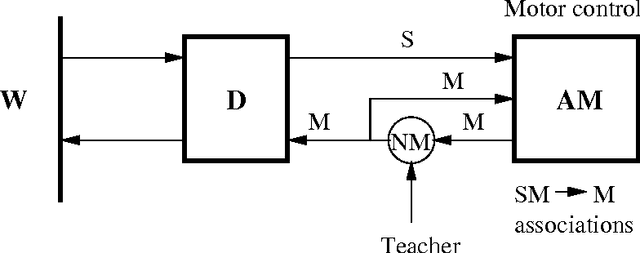

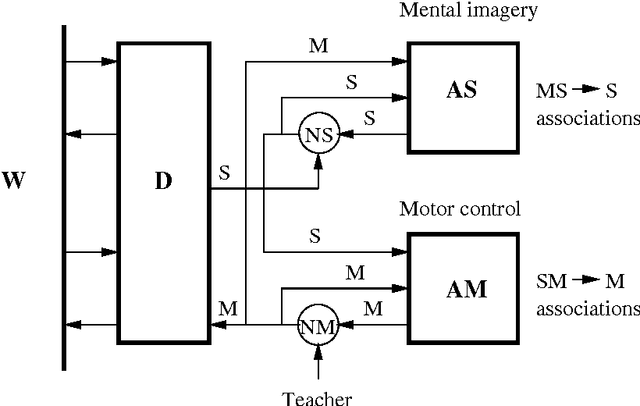

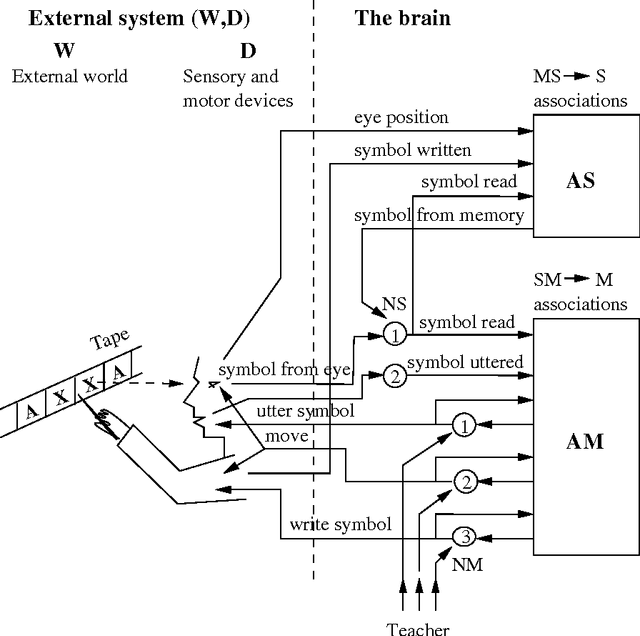

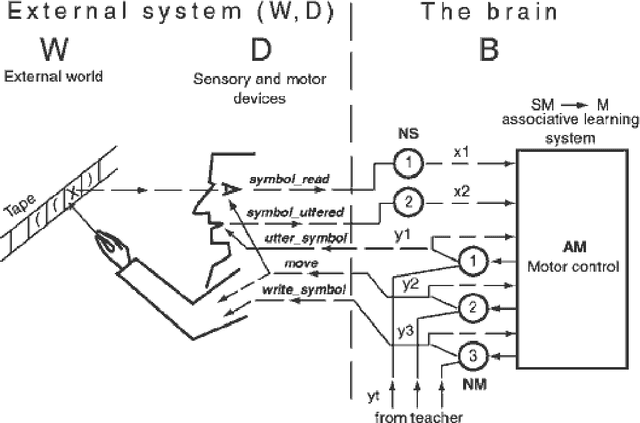

Abstract: This paper goes back to Turing (1936) and treats his machine as a cognitive model (W,D,B), where W is an "external world" represented by a memory device (the tape divided into squares) and (D,B) is a simple robot consisting of the sensory-motor devices, D, and the brain, B. The robot's sensory-motor devices (the "eye," the "hand," and the "organ of speech") allow the robot to simulate the work of any Turing machine. The robot simulates the internal states of a Turing machine by "talking to itself." At the training stage, the teacher forces the robot (by acting directly on its motor centers) to perform several examples of an algorithm with different input data presented on the tape. Two effects are achieved: 1) the robot learns to perform the demonstrated algorithm with any input data using the tape; 2) the robot learns to perform the algorithm "mentally," using an "imaginary tape." The model illustrates the simplest concept of a universal learning neurocomputer, demonstrates the universality of associative learning as a programming mechanism, and provides a simplified but nontrivial, neurobiologically plausible explanation of the phenomena of working memory and mental imagery. The model is implemented as a user-friendly Windows program called EROBOT. The program is available at www.brain0.com/software.html.
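The training scheme described above (a teacher forcing the robot through a few examples, after which the robot runs the algorithm on new input) can be sketched very loosely as follows. This is an assumption-laden illustration, not EROBOT: the robot's "brain" is reduced to a dictionary that associates (state, symbol read) with (symbol written, move, next state), the teacher is a hypothetical `teacher_trace` function that replays a known rule table, and the state names `"q"` and `"H"` (halt) are arbitrary. Running on a private copy of the tape is only a rough stand-in for the paper's "imaginary tape."

```python
BLANK = "_"
MOVES = {"R": 1, "L": -1, "S": 0}


def teacher_trace(rules, tape, state="q"):
    """Hypothetical teacher: runs a known rule table on `tape` and records each
    step as (state, read, write, move, next_state), standing in for the motor
    actions the teacher forces on the robot during training."""
    tape, head, trace = list(tape), 0, []
    while state != "H":
        if head < 0:  # extend the tape with blanks on demand
            tape.insert(0, BLANK)
            head = 0
        elif head == len(tape):
            tape.append(BLANK)
        read = tape[head]
        write, move, nxt = rules[(state, read)]
        trace.append((state, read, write, move, nxt))
        tape[head] = write
        head += MOVES[move]
        state = nxt
    return trace


class Robot:
    def __init__(self):
        # Associative memory: (state, symbol read) -> (symbol written, move, next state).
        self.memory = {}

    def train(self, trace):
        # Store every demonstrated step; repeated examples overwrite identical entries.
        for state, read, write, move, nxt in trace:
            self.memory[(state, read)] = (write, move, nxt)

    def run(self, tape, state="q"):
        """Replay the learned associations on a private copy of the tape.
        Raises KeyError for a (state, symbol) pair never seen in training."""
        tape, head = list(tape), 0
        while state != "H":
            if head < 0:
                tape.insert(0, BLANK)
                head = 0
            elif head == len(tape):
                tape.append(BLANK)
            write, move, state = self.memory[(state, tape[head])]
            tape[head] = write
            head += MOVES[move]
        return "".join(tape).strip(BLANK)


if __name__ == "__main__":
    # Bit inversion: flip each symbol while moving right, halt on the first blank.
    rules = {("q", "0"): ("1", "R", "q"),
             ("q", "1"): ("0", "R", "q"),
             ("q", BLANK): (BLANK, "S", "H")}
    robot = Robot()
    robot.train(teacher_trace(rules, "01"))  # a single short demonstration
    print(robot.run("1101"))  # prints 0010
```

One demonstration on "01" happens to exercise every (state, symbol) pair of this tiny algorithm, so the robot generalizes to inputs of any length; a richer algorithm would need enough examples to cover all of its transitions.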

Ensembles of Protein Molecules as Statistical Analog Computers

Aug 13, 2003

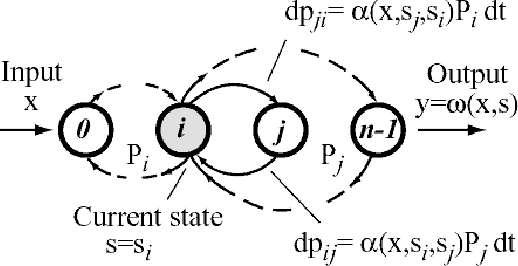

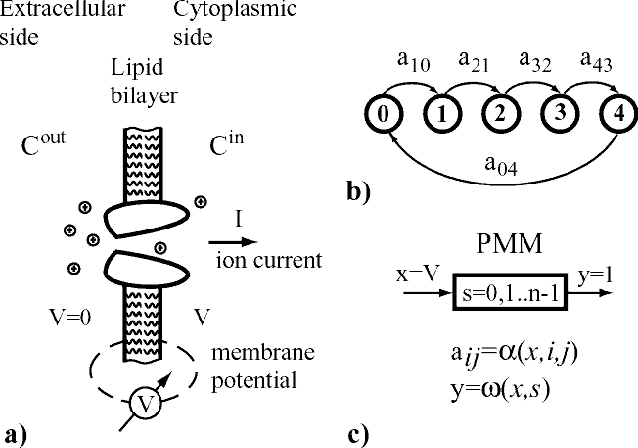

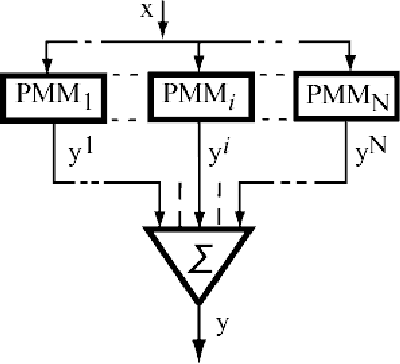

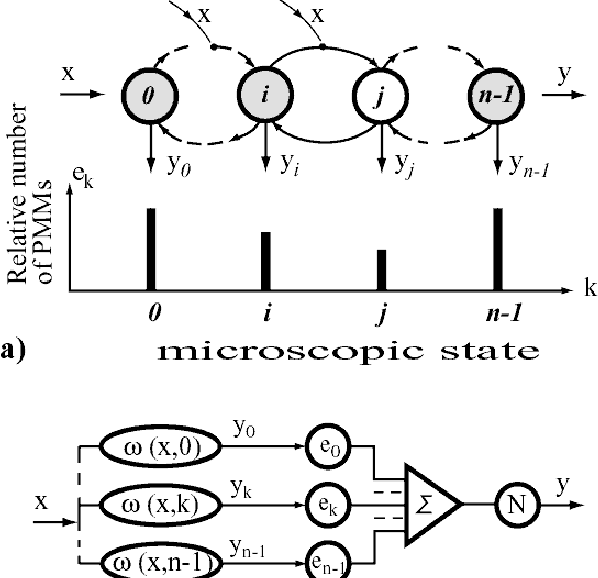

Abstract: A class of analog computers built from large numbers of microscopic probabilistic machines is discussed. It is postulated that such computers are implemented in biological systems as ensembles of protein molecules. The formalism is based on an abstract computational model referred to as the Protein Molecule Machine (PMM). A PMM is a continuous-time first-order Markov system with real input and output vectors, a finite set of discrete states, and input-dependent conditional probability densities of state transitions. The output of a PMM is a function of its input and state. The components of the input vector, called generalized potentials, can be interpreted as membrane potential and concentrations of neurotransmitters. The components of the output vector, called generalized currents, can represent ion currents and the flows of second messengers. An Ensemble of PMMs (EPMM) is a set of independent identical PMMs with the same input vector, whose output vector equals the sum of the output vectors of the individual PMMs. The paper suggests that biological neurons have much more sophisticated computational resources than the presently popular models of artificial neurons.
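A minimal simulation can illustrate the EPMM definition above: many independent identical machines share one input, and the ensemble output is the sum of the individual outputs. The sketch assumes the simplest possible PMM, with two states (closed = 0, open = 1), a scalar input `u`, and exponential (rate-based) transition densities, which is a special case of the input-dependent densities the abstract mentions. The rate functions `alpha` and `beta`, the output `pmm_output` with its conductance and reversal value, and all numeric constants are hypothetical, chosen only to make the behavior visible.

```python
import math
import random


def alpha(u):
    # Hypothetical input-dependent opening rate (per ms); u is the generalized potential (mV).
    return 0.5 * math.exp(u / 20.0)


def beta(u):
    # Hypothetical input-dependent closing rate (per ms).
    return 0.5 * math.exp(-u / 20.0)


def pmm_output(u, state, g=1.0, e_rev=50.0):
    # Output of one PMM as a function of its input and its discrete state:
    # a "generalized current" that flows only in the open state (state == 1).
    return g * state * (u - e_rev)


def simulate_epmm(u, n=2000, t_end=10.0, dt=0.01, seed=0):
    """Simulate n independent identical two-state PMMs driven by the same input u.

    Each time step a closed machine opens with probability alpha(u)*dt and an
    open one closes with probability beta(u)*dt, a first-order discretization
    of the continuous-time chain (valid while rate*dt << 1). Returns the EPMM
    output, i.e. the sum of the individual outputs, and the open fraction.
    """
    rng = random.Random(seed)
    states = [0] * n  # all machines start closed
    p_open, p_close = alpha(u) * dt, beta(u) * dt
    for _ in range(int(t_end / dt)):
        for i in range(n):
            r = rng.random()
            if states[i] == 0 and r < p_open:
                states[i] = 1
            elif states[i] == 1 and r < p_close:
                states[i] = 0
    total = sum(pmm_output(u, s) for s in states)
    return total, sum(states) / n


if __name__ == "__main__":
    for u in (-20.0, 0.0, 20.0):
        total, frac = simulate_epmm(u)
        expected = alpha(u) / (alpha(u) + beta(u))
        print(f"u={u:+.0f} mV  open fraction={frac:.3f}  "
              f"(steady state {expected:.3f})  ensemble current={total:.1f}")
```

Although each machine is a discrete random process, the ensemble output approaches a smooth, deterministic function of the input: for this two-state chain the open fraction settles near alpha(u) / (alpha(u) + beta(u)), with fluctuations that shrink roughly as 1/sqrt(n). This is the sense in which a large ensemble can act as an analog computer.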

Can the whole brain be simpler than its "parts"?

Nov 16, 2002

Abstract: This is the first in a series of connected papers discussing the problem of a dynamically reconfigurable universal learning neurocomputer that could serve as a computational model for the whole human brain. The whole series is entitled "The Brain Zero Project. My Brain as a Dynamically Reconfigurable Universal Learning Neurocomputer." (For more information visit the website www.brain0.com.) This introductory paper is concerned with general methodology. Its main goal is to explain why it is critically important for both neural modeling and cognitive modeling to pay close attention to the basic requirements of the whole brain as a complex computing system. The author argues that it can be easier to develop an adequate computational model for the whole "unprogrammed" (untrained) human brain than to find adequate formal representations of some nontrivial parts of the brain's performance. (Similarly, it is easier to describe the behavior of a complex analytic function than the behavior of its real or imaginary part alone.) The "curse of dimensionality" that plagues purely phenomenological ("brainless") cognitive theories is a natural penalty for attempting to represent insufficiently large parts of the brain's performance in a state space of insufficiently high dimensionality. A "partial" modeler encounters a "Catch-22": an attempt to simplify a cognitive problem by artificially reducing its dimensionality makes the problem more difficult.
